Different kinds of polls have evolved throughout history:

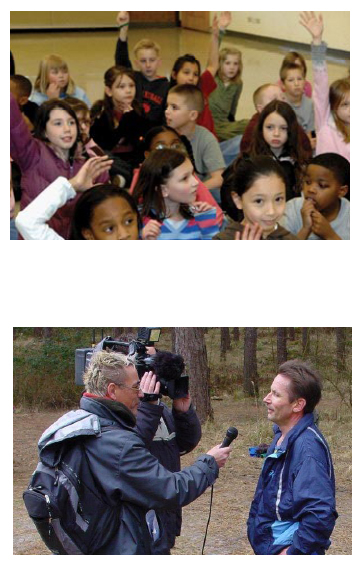

• One example is the straw poll, a kind of election poll used in the US in the nineteenth century (first in 1824), from which modern political polling originated. These polls ranged from oral counts and hand raising to paper ballots and writing preferences in a book. The problem of ‘selection bias’ already existed then: the results were influenced by the characteristics of those who were able to participate, who differed from the electorate or population to which the results would be generalized.

• ‘Scientific polls’ are another example. Gallup (around 1940) is recognized for one of the early versions, which involved sending interviewers to randomly selected locations and then allowing the interviewers largely to select their own respondents to fill ‘quotas’ based on the population characteristics to be represented in the poll. Because the interviewers chose the respondents, this also led to selection biases.

• Academic researchers, who were beginning to engage in large-scale public opinion and voting studies at this time, made a concerted and continuing effort to improve and defend their research. Academic research can be seen as a third example.

<Shapiro, Polling, 2001 Elsevier Science Ltd.>

//‘Political polling and market and academic survey research increased after 1948. The early and subsequent public pollsters who provided their survey results to the mass media and other subscribers had used their political polling as advertising to generate business for their proprietary market and other types of research. Market researchers and other pollsters expanded their work into polling and consulting for political parties and candidates. Polling experienced its greatest expansion beginning in the 1970s, when it was found that sufficiently representative and accurate polling could be done by telephone (based on experiments comparing polls conducted by phone with those done in-person), eventually leading all the major pollsters to do most of their polling by phone. The most important national news media, which had relied exclusively on polling by public pollsters, began sponsoring and conducting their own polls, establishing their own, often joint, polling operations or making arrangements to contract-out polls in which they controlled the content and reporting of results’.//
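The selection bias noted earlier for straw polls and quota-based polls can be illustrated with a small calculation. The sketch below is only an illustration under assumed numbers: the two groups, their population shares, and their levels of support are hypothetical and do not come from any real poll. It compares the support a poll would report when the sample mirrors the population with the support it would report when one group is over-represented among participants.

```python
def poll_estimate(sample_shares, support_by_group):
    """Expected share of supporters, given each group's share of the sample."""
    return sum(sample_shares[g] * support_by_group[g] for g in sample_shares)

# Hypothetical numbers, chosen only for illustration.
population_shares = {"group_a": 0.3, "group_b": 0.7}   # who makes up the electorate
support_by_group = {"group_a": 0.7, "group_b": 0.4}    # support within each group
straw_poll_shares = {"group_a": 0.8, "group_b": 0.2}   # who actually took part

true_support = poll_estimate(population_shares, support_by_group)
straw_poll_support = poll_estimate(straw_poll_shares, support_by_group)
bias = straw_poll_support - true_support

print(f"support in the population: {true_support:.2f}")
print(f"support in the straw poll: {straw_poll_support:.2f}")
print(f"selection bias:            {bias:+.2f}")
```

With these assumed figures the poll overstates support simply because participants differ from the population, even though every answer was recorded correctly.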

Polls can be conducted in different ways depending on the research goal, the kind of survey questionnaire to be administered, and the available resources (money and time). The most time-consuming and expensive method is in-person national polling (visiting randomly selected homes throughout the nation), which can yield in-depth, visual, and sensitive information. In contrast, election ‘exit polls’, which are used to project election results and provide the basis for more in-depth statistical analysis of voters’ behavior, are short and efficient surveys based on random samples of voters exiting voting places.

Exit polling has occasionally been controversial due to the ‘early’ reporting of results that might affect the behavior of those who vote later in the day.

Telephone polling (typically using Random Digit Dialing, RDD) is less costly because it eliminates travel costs, and it is easier to monitor and validate than in-person interviews; however, it has higher rates of non-response due to households that are not reached (no telephone, no answer, busy signals, answering machines, and refusals to participate). Mail surveys are the least expensive but produce the lowest response rates unless extensive follow-ups are made.
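As a rough illustration of the telephone approach, the sketch below draws numbers by Random Digit Dialing and computes a simple response rate. It is a minimal sketch under stated assumptions: the prefixes, the outcome counts, and the function names `rdd_sample` and `response_rate` are hypothetical. The response rate is simplified to completed interviews divided by all numbers attempted, whereas survey organizations use more detailed definitions.

```python
import random

def rdd_sample(prefixes, n, seed=None):
    """Draw n phone numbers: a randomly chosen prefix plus four random digits."""
    rng = random.Random(seed)
    return [f"{rng.choice(prefixes)}-{rng.randrange(10000):04d}" for _ in range(n)]

def response_rate(dispositions):
    """Completed interviews divided by all numbers attempted (simplified)."""
    attempted = sum(dispositions.values())
    return dispositions.get("completed", 0) / attempted if attempted else 0.0

# Hypothetical area-code and exchange prefixes.
numbers = rdd_sample(["555-201", "555-202"], n=5, seed=1)

# Hypothetical call outcomes for 1,000 dialed numbers.
dispositions = {
    "completed": 300,
    "refused": 250,
    "no_answer": 280,
    "busy": 70,
    "answering_machine": 100,
}
rate = response_rate(dispositions)
print(numbers)
print(f"response rate: {rate:.0%}")
```

Because the last four digits are random, unlisted numbers can be reached as well as listed ones. Households without a telephone are never dialed, so they appear in no outcome category at all.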

Beyond statistical sampling error and non-response bias, there are other sources of error in surveys that are not easily quantified.

- The responses to survey questions and the measurement of opinions and behavior can be affected by:
o how questions are worded
o what response categories are offered
o whether questions have fixed or forced choices
o whether questions are asked, and responses recorded, as open-ended questions
- There may be ‘context effects’ produced by the order in which questions are asked.
- A major source of error can arise from how research problems are formulated or specified.
- Care needs to be exercised in drawing inferences about actual behavior from survey measures of opinion and from self-reports of future (and past) behavior.
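Unlike the sources of error listed above, statistical sampling error can be quantified. The sketch below uses the standard margin-of-error formula for a proportion. It assumes a simple random sample and a 95% confidence level (z = 1.96); the sample size and the observed share are hypothetical. The formula does not capture question wording, context effects, or non-response.

```python
import math

def margin_of_error(p, n, z=1.96):
    """Margin of error for a proportion p from a simple random sample of size n."""
    return z * math.sqrt(p * (1 - p) / n)

# Hypothetical poll: 1,000 respondents, 50% choosing one answer.
moe = margin_of_error(0.5, 1000)
print(f"margin of error: +/- {moe * 100:.1f} percentage points")
```

For these assumed values the margin is about 3 percentage points. Halving it would require roughly four times as many respondents, since the error shrinks with the square root of the sample size.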